9.2007 – 3.2010

Microsoft Adaptive Keyboards

MY ROLE

In 1999 the Applied Science Group began investigating inexpensive adaptive hardware solutions. While they focused on hardware innovation, I focused on the interaction model starting in 2007, prototyping contextually relevant software experiences from gaming to CAD to Office applications and articulating opportunities for Microsoft and PC Hardware.

Display technologies at the time had limited viewing angles, were thick, required power and were costly. The Applied Science Group was committed to overcoming these challenges in hopes of delivering adaptive hardware at a price point the market could tolerate. At the same time, there were no features in Windows Vista for developing adaptive experiences. It was the classic chicken-and-egg situation: build the software API first and let hardware and applications follow, or build the hardware first with an exclusive experience, followed by a software API and other applications? A tough business question, but an intriguing area to explore for several of us in PC Hardware.

Basically ourselves. It was an internally focused research activity within the PC Hardware business.

The goals for this research effort were to…

- Identify applications with the most opportunity, prototyping specific scenarios which highlight the value.

- Influence Windows planning and explore new business opportunities.

- Create a hardware and software development kit to further explore opportunities in higher fidelity.

Ideate. Prototype. Rinse and repeat.

Explorations like this can either start small and go deep, developing just one idea, or go broad, developing several ideas. Going too broad can lead to paralysis, making it hard to find a concept worth pursuing. Going too deep can miss an important opportunity. To focus this exploration, I worked towards identifying categories of applications that had a higher level of complexity. Applications in this group had important functions buried deep within menus and tons of tools or keyboard shortcuts to remember. The premise was to find the applications where we waste time searching for functionality. One theory was that contextualizing functionality could simplify the experience and minimize disruptions to our ‘flow’. The other theory was around surfacing background activities we want to monitor.

Transitioning from theory to actually making things is a crucial step. Storyboarding is often where I start because I can explore a broad range of ideas without spending too much time on any one. Over the years, I’ve also found that sketches, rather than written scenarios, elicit a stronger reaction and more often lead to a common understanding. I generated storyboards together with an external vendor. Afterwards, I focused in on the most promising ideas.

With a rigged overhead projector and a keyboard painted completely white, I continued to explore these ideas at a higher fidelity. Starting with the most basic keyboard interactions, this prototype helped me demonstrate modal functionality with the SHIFT, CTRL and ALT keys. Toggling the legend between uppercase and lowercase characters, special characters and key combo functions was significant but only scratched the surface of possibilities. Richer experiences like re-assignable hotkeys, glanceable interactive content outside of the keyset and contextualized, application-specific functionality were also possibilities. With the value of adaptive keyboards becoming clear, the questions shifted. How important was color? What display resolution was needed? Was touch capability necessary, and how responsive did it need to be? I explored these questions and investigated the pros and cons of smaller standard-size screens and non-standard-size screens in the areas outside of the keyset. Whether projecting video, images, PowerPoint presentations or interactive applications built with Flash, this prototyping method was extremely versatile and efficient.

Using an overhead projection to explore legend morphing and glanceable content

Using an overhead projection to evaluate screen sizes and hotkeys

I later used a chroma key technique on an actual keyboard to composite videos in post-production with After Effects. This technique was another efficient method for bringing interactions to life. Watching an interaction is a tease, though. Often the real challenges are only discovered when prototyping something much closer to the real thing. Higher fidelity prototypes were needed, so I began concealing displays and touch screens within keyboards. Using Flash and later WPF, I was then able to build applications which extended across two screens. These fully interactive applications took more time to create, but were worth it. They also ran on a Microsoft Surface table. Using the Surface table with a clear 3D printed keyboard, Meng Li, an intern I helped mentor, further explored adaptive experiences in 2008.

This work inspired form factors with standard and non-standard size displays above the keyset. It also raised new questions about viewing angles, something which would be important if this were ever ‘productized’. For several of us, though, the most exciting opportunities were fully adaptive keyboard experiences. Since most of the prototyping to date was application-agnostic, rich adaptive application experiences were next to explore.

Using chroma key techniques to prototype touch screen interactions

Using embedded touch screens and Surface table to prototype

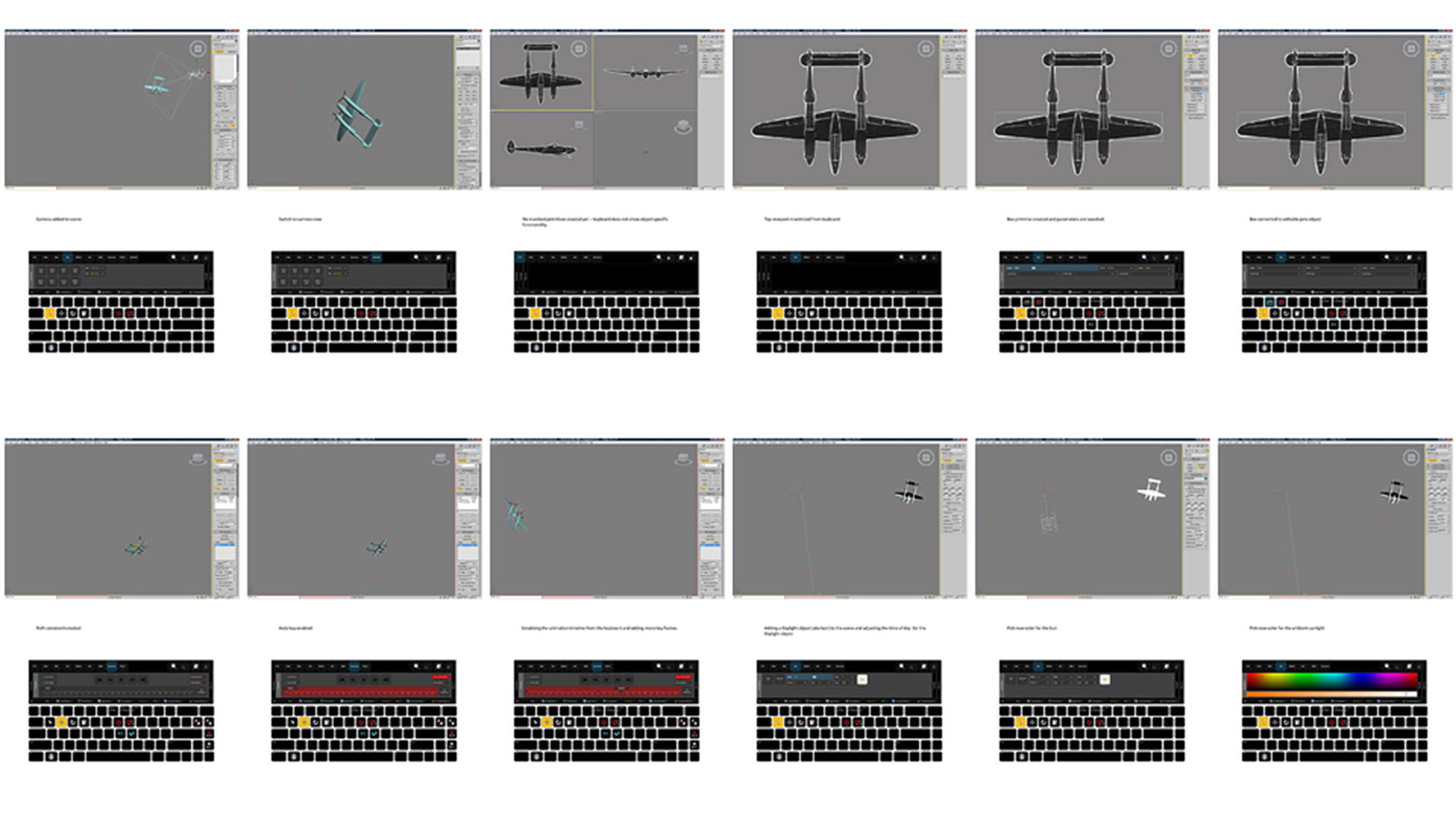

The investigation started with Microsoft Office and then dove into other applications, like World of Warcraft, 3D Studio Max, Maya, and Visual Studio. For each application, I spent a couple of weeks immersing myself in it to articulate an adaptive scenario. As I became familiar with each app, I transitioned to breaking down particular tasks with wireframes. Capturing a screen and visualizing contextualized sets of functionality helped me identify workflows to later prototype. Without any guilt, I can actually say “I played World of Warcraft for work” and enjoyed every minute of it.

Wireframing adaptive application experiences

Turning these wireframes into prototypes we could step through helped us better understand the value of each scenario. The demonstration of rich adaptive application experiences opened doors with Windows planning and kicked off business partnership investigations too. Nothing was real yet, but things were interesting enough to spin up a larger team to develop a working hardware and software dev-kit.

As the larger team was spinning up, I began delving into WPF and Silverlight. I quickly realized how amazing XAML and C# were for prototyping interactions. With Expression Blend and ‘enough’ coding know-how, I was able to explore functionality, layout and animation. I was ultimately responsible for developing the keyset’s WPF custom controls as well as a fully functional sample application shown in this video. When in focus, the application extended contextual functionality to the keyboard and the touch screen above the keyset. With this dev-kit it was easy to create other rich experiences.

Functioning adaptive development kit prototype

In 2010 Microsoft shared the adaptive keyboard dev-kit with a group of students and asked them to present their ideas at the User Interface Software and Technology (UIST) symposium. The students could create whatever they imagined and what they did was truly amazing. This research was never ‘productized’ for several reasons, but it definitely was an interesting space to explore.